Best Practices for Model Deployment#

Introduction#

Model deployment is the step in a computer vision project that brings a model from the development phase into a real-world application. There are various model deployment options: cloud deployment offers scalability and ease of access, edge deployment reduces latency by bringing the model closer to the data source, and local deployment ensures privacy and control. Choosing the right strategy depends on your application’s needs, balancing speed, security, and scalability.

Watch: How to Optimize and Deploy AI Models: Best Practices, Troubleshooting, and Security Considerations

It’s also important to follow best practices when deploying a model because deployment can significantly impact the effectiveness and reliability of the model’s performance. In this guide, we’ll focus on how to make sure that your model deployment is smooth, efficient, and secure.

Model Deployment Options#

Often times, once a model is trained, evaluated, and tested, it needs to be converted into specific formats to be deployed effectively in various environments, such as cloud, edge, or local devices.

With respect to YOLO11, you can export your model to different formats. For example, when you need to transfer your model between different frameworks, ONNX is an excellent tool and exporting to YOLO11 to ONNX is easy. You can check out more options about integrating your model into different environments smoothly and effectively here.

Choosing a Deployment Environment#

Choosing where to deploy your computer vision model depends on multiple factors. Different environments have unique benefits and challenges, so it’s essential to pick the one that best fits your needs.

Cloud Deployment#

Cloud deployment is great for applications that need to scale up quickly and handle large amounts of data. Platforms like AWS, Google Cloud, and Azure make it easy to manage your models from training to deployment. They offer services like AWS SageMaker, Google AI Platform, and Azure Machine Learning to help you throughout the process.

However, using the cloud can be expensive, especially with high data usage, and you might face latency issues if your users are far from the data centers. To manage costs and performance, it’s important to optimize resource use and ensure compliance with data privacy rules.

Edge Deployment#

Edge deployment works well for applications needing real-time responses and low latency, particularly in places with limited or no internet access. Deploying models on edge devices like smartphones or IoT gadgets ensures fast processing and keeps data local, which enhances privacy. Deploying on edge also saves bandwidth due to reduced data sent to the cloud.

However, edge devices often have limited processing power, so you’ll need to optimize your models. Tools like TensorFlow Lite and NVIDIA Jetson can help. Despite the benefits, maintaining and updating many devices can be challenging.

Local Deployment#

Local Deployment is best when data privacy is critical or when there’s unreliable or no internet access. Running models on local servers or desktops gives you full control and keeps your data secure. It can also reduce latency if the server is near the user.

However, scaling locally can be tough, and maintenance can be time-consuming. Using tools like Docker for containerization and Kubernetes for management can help make local deployments more efficient. Regular updates and maintenance are necessary to keep everything running smoothly.

Model Optimization Techniques#

Optimizing your computer vision model helps it runs efficiently, especially when deploying in environments with limited resources like edge devices. Here are some key techniques for optimizing your model.

Model Pruning#

Pruning reduces the size of the model by removing weights that contribute little to the final output. It makes the model smaller and faster without significantly affecting accuracy. Pruning involves identifying and eliminating unnecessary parameters, resulting in a lighter model that requires less computational power. It is particularly useful for deploying models on devices with limited resources.

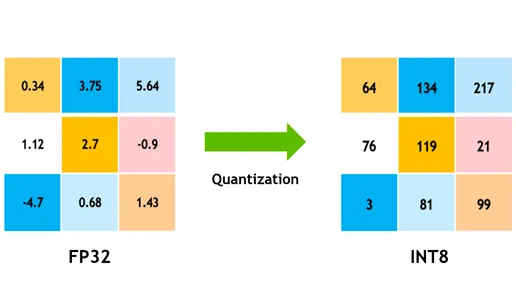

Model Quantization#

Quantization converts the model’s weights and activations from high precision (like 32-bit floats) to lower precision (like 8-bit integers). By reducing the model size, it speeds up inference. Quantization-aware training (QAT) is a method where the model is trained with quantization in mind, preserving accuracy better than post-training quantization. By handling quantization during the training phase, the model learns to adjust to lower precision, maintaining performance while reducing computational demands.

Knowledge Distillation#

Knowledge distillation involves training a smaller, simpler model (the student) to mimic the outputs of a larger, more complex model (the teacher). The student model learns to approximate the teacher’s predictions, resulting in a compact model that retains much of the teacher’s accuracy. This technique is beneficial for creating efficient models suitable for deployment on edge devices with constrained resources.

Troubleshooting Deployment Issues#

You may face challenges while deploying your computer vision models, but understanding common problems and solutions can make the process smoother. Here are some general troubleshooting tips and best practices to help you navigate deployment issues.

Your Model is Less Accurate After Deployment#

Experiencing a drop in your model’s accuracy after deployment can be frustrating. This issue can stem from various factors. Here are some steps to help you identify and resolve the problem:

Check Data Consistency: Check that the data your model is processing post-deployment is consistent with the data it was trained on. Differences in data distribution, quality, or format can significantly impact performance.

Validate Preprocessing Steps: Verify that all preprocessing steps applied during training are also applied consistently during deployment. This includes resizing images, normalizing pixel values, and other data transformations.

Evaluate the Model’s Environment: Ensure that the hardware and software configurations used during deployment match those used during training. Differences in libraries, versions, and hardware capabilities can introduce discrepancies.

Monitor Model Inference: Log inputs and outputs at various stages of the inference pipeline to detect any anomalies. It can help identify issues like data corruption or improper handling of model outputs.

Review Model Export and Conversion: Re-export the model and make sure that the conversion process maintains the integrity of the model weights and architecture.

Test with a Controlled Dataset: Deploy the model in a test environment with a dataset you control and compare the results with the training phase. You can identify if the issue is with the deployment environment or the data.

When deploying YOLO11, several factors can affect model accuracy. Converting models to formats like TensorRT involves optimizations such as weight quantization and layer fusion, which can cause minor precision losses. Using FP16 (half-precision) instead of FP32 (full-precision) can speed up inference but may introduce numerical precision errors. Also, hardware constraints, like those on the Jetson Nano, with lower CUDA core counts and reduced memory bandwidth, can impact performance.

Inferences Are Taking Longer Than You Expected#

When deploying machine learning models, it’s important that they run efficiently. If inferences are taking longer than expected, it can affect the user experience and the effectiveness of your application. Here are some steps to help you identify and resolve the problem:

Implement Warm-Up Runs: Initial runs often include setup overhead, which can skew latency measurements. Perform a few warm-up inferences before measuring latency. Excluding these initial runs provides a more accurate measurement of the model’s performance.

Optimize the Inference Engine: Double-check that the inference engine is fully optimized for your specific GPU architecture. Use the latest drivers and software versions tailored to your hardware to ensure maximum performance and compatibility.

Use Asynchronous Processing: Asynchronous processing can help manage workloads more efficiently. Use asynchronous processing techniques to handle multiple inferences concurrently, which can help distribute the load and reduce wait times.

Profile the Inference Pipeline: Identifying bottlenecks in the inference pipeline can help pinpoint the source of delays. Use profiling tools to analyze each step of the inference process, identifying and addressing any stages that cause significant delays, such as inefficient layers or data transfer issues.

Use Appropriate Precision: Using higher precision than necessary can slow down inference times. Experiment with using lower precision, such as FP16 (half-precision), instead of FP32 (full-precision). While FP16 can reduce inference time, also keep in mind that it can impact model accuracy.

If you are facing this issue while deploying YOLO11, consider that YOLO11 offers various model sizes, such as YOLO11n (nano) for devices with lower memory capacity and YOLO11x (extra-large) for more powerful GPUs. Choosing the right model variant for your hardware can help balance memory usage and processing time.

Also keep in mind that the size of the input images directly impacts memory usage and processing time. Lower resolutions reduce memory usage and speed up inference, while higher resolutions improve accuracy but require more memory and processing power.

Security Considerations in Model Deployment#

Another important aspect of deployment is security. The security of your deployed models is critical to protect sensitive data and intellectual property. Here are some best practices you can follow related to secure model deployment.

Secure Data Transmission#

Making sure data sent between clients and servers is secure is very important to prevent it from being intercepted or accessed by unauthorized parties. You can use encryption protocols like TLS (Transport Layer Security) to encrypt data while it’s being transmitted. Even if someone intercepts the data, they won’t be able to read it. You can also use end-to-end encryption that protects the data all the way from the source to the destination, so no one in between can access it.

Access Controls#

It’s essential to control who can access your model and its data to prevent unauthorized use. Use strong authentication methods to verify the identity of users or systems trying to access the model, and consider adding extra security with multi-factor authentication (MFA). Set up role-based access control (RBAC) to assign permissions based on user roles so that people only have access to what they need. Keep detailed audit logs to track all access and changes to the model and its data, and regularly review these logs to spot any suspicious activity.

Model Obfuscation#

Protecting your model from being reverse-engineered or misuse can be done through model obfuscation. It involves encrypting model parameters, such as weights and biases in neural networks, to make it difficult for unauthorized individuals to understand or alter the model. You can also obfuscate the model’s architecture by renaming layers and parameters or adding dummy layers, making it harder for attackers to reverse-engineer it. You can also serve the model in a secure environment, like a secure enclave or using a trusted execution environment (TEE), can provide an extra layer of protection during inference.

Conclusion and Next Steps#

We walked through some best practices to follow when deploying computer vision models. By securing data, controlling access, and obfuscating model details, you can protect sensitive information while keeping your models running smoothly. We also discussed how to address common issues like reduced accuracy and slow inferences using strategies such as warm-up runs, optimizing engines, asynchronous processing, profiling pipelines, and choosing the right precision.

After deploying your model, the next step would be monitoring, maintaining, and documenting your application. Regular monitoring helps catch and fix issues quickly, maintenance keeps your models up-to-date and functional, and good documentation tracks all changes and updates. These steps will help you achieve the goals of your computer vision project.

FAQ#

What are the best practices for deploying a machine learning model using Ultralytics YOLO11?#

Deploying a machine learning model, particularly with Ultralytics YOLO11, involves several best practices to ensure efficiency and reliability. First, choose the deployment environment that suits your needs—cloud, edge, or local. Optimize your model through techniques like pruning, quantization, and knowledge distillation for efficient deployment in resource-constrained environments. Lastly, ensure data consistency and preprocessing steps align with the training phase to maintain performance. You can also refer to model deployment options for more detailed guidelines.

How can I troubleshoot common deployment issues with Ultralytics YOLO11 models?#

Troubleshooting deployment issues can be broken down into a few key steps. If your model’s accuracy drops after deployment, check for data consistency, validate preprocessing steps, and ensure the hardware/software environment matches what you used during training. For slow inference times, perform warm-up runs, optimize your inference engine, use asynchronous processing, and profile your inference pipeline. Refer to troubleshooting deployment issues for a detailed guide on these best practices.

How does Ultralytics YOLO11 optimization enhance model performance on edge devices?#

Optimizing Ultralytics YOLO11 models for edge devices involves using techniques like pruning to reduce the model size, quantization to convert weights to lower precision, and knowledge distillation to train smaller models that mimic larger ones. These techniques ensure the model runs efficiently on devices with limited computational power. Tools like TensorFlow Lite and NVIDIA Jetson are particularly useful for these optimizations. Learn more about these techniques in our section on model optimization.

What are the security considerations for deploying machine learning models with Ultralytics YOLO11?#

Security is paramount when deploying machine learning models. Ensure secure data transmission using encryption protocols like TLS. Implement robust access controls, including strong authentication and role-based access control (RBAC). Model obfuscation techniques, such as encrypting model parameters and serving models in a secure environment like a trusted execution environment (TEE), offer additional protection. For detailed practices, refer to security considerations.

How do I choose the right deployment environment for my Ultralytics YOLO11 model?#

Selecting the optimal deployment environment for your Ultralytics YOLO11 model depends on your application’s specific needs. Cloud deployment offers scalability and ease of access, making it ideal for applications with high data volumes. Edge deployment is best for low-latency applications requiring real-time responses, using tools like TensorFlow Lite. Local deployment suits scenarios needing stringent data privacy and control. For a comprehensive overview of each environment, check out our section on choosing a deployment environment.